Let's talk reputable software and application allowlisting (Part 1)

- Kim & Tom

- Mar 12


A while ago I tweeted that "Signed and Reputable" didn't necessarily equate to "desirable" or "can't be used with malicious intent". A tweet is a tweet, and doesn't allow for a whole lot of room to clarify your point. So here's a blog article describing what I meant by it, or at least the first part of what I meant. Part 2 will be the real gotcha, stay tuned.

What is the Intelligent Security Graph?

Microsoft's Intelligent Security Graph (ISG) is a threat intelligence system that ingests signals from billions of endpoints, cloud services, and Microsoft products to make trust decisions. One of the things it does is classify software as "reputable" — meaning it's widely distributed, commonly seen across the Microsoft ecosystem, and hasn't been flagged as malicious.

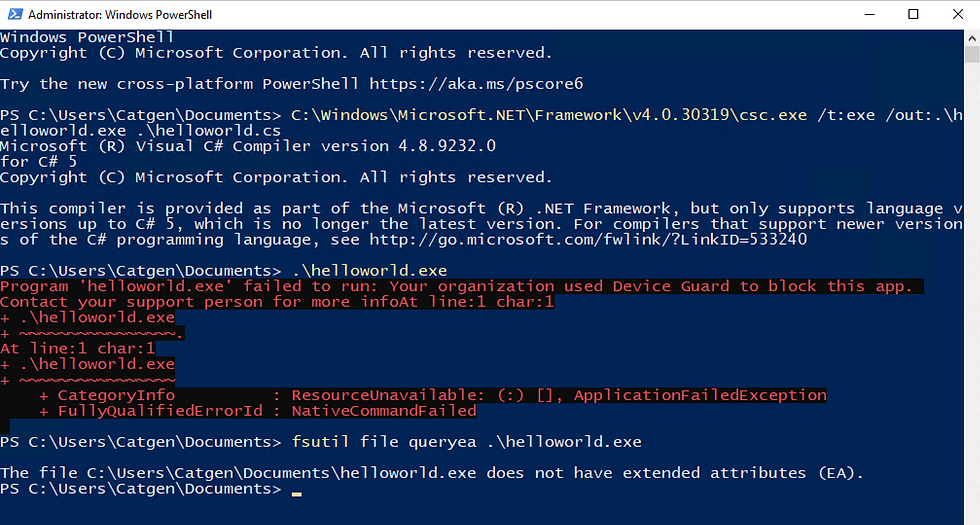

When you configure Windows Defender Application Control (WDAC), also known as App Control for Business, with an ISG-enabled policy, you can allow software that the ISG deems reputable.

On paper, this sounds like a reasonable middle ground between a strict allowlist and no controls at all.
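If you want to check whether an existing policy leans on the ISG, here is a minimal Python sketch. It assumes the policy XML lists its rule options as `<Option>` elements and that the ISG option appears as the text `Enabled:Intelligent Security Graph Authorization`. The sample policy and the function name `policy_uses_isg` are illustrative only, not taken from a real deployment.

```python
import xml.etree.ElementTree as ET

# Assumed text of the rule option that turns on ISG-based authorization.
ISG_OPTION = "Enabled:Intelligent Security Graph Authorization"


def policy_uses_isg(policy_xml: str) -> bool:
    """Return True if the policy XML contains the ISG rule option."""
    root = ET.fromstring(policy_xml)
    for element in root.iter():
        if not isinstance(element.tag, str):
            continue  # skip anything that is not a regular element
        # Drop the "{namespace}" prefix so the check works with or without one.
        local_name = element.tag.rsplit("}", 1)[-1]
        if local_name == "Option" and (element.text or "").strip() == ISG_OPTION:
            return True
    return False


# Illustrative, heavily trimmed policy; a real policy contains far more.
SAMPLE_POLICY = """<SiPolicy xmlns="urn:schemas-microsoft-com:sipolicy">
  <Rules>
    <Rule><Option>Enabled:Audit Mode</Option></Rule>
    <Rule><Option>Enabled:Intelligent Security Graph Authorization</Option></Rule>
  </Rules>
</SiPolicy>"""

if __name__ == "__main__":
    print("Policy relies on ISG:", policy_uses_isg(SAMPLE_POLICY))
```

A check like this only tells you that the option is set. It says nothing about which applications the ISG will end up allowing on your endpoints.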

Below are some of the problems I have with this approach. Part 2 (teaser, teaser) will explain a separate issue.

Reputable is not the same as appropriate

"Reputable" simply means Microsoft has seen this software a lot and hasn't classified it as malware. That's it. It says nothing about whether that software should be running in your environment.

Take a few examples:

PsExec — Signed by Sysinternals/Microsoft. Seen on millions of endpoints. Absolutely reputable. Also one of the most abused tools in ransomware playbooks, used for lateral movement and remote execution.

AnyDesk / TeamViewer — Legitimately signed, widely distributed, reputable. Also a go-to for threat actors performing hands-on-keyboard access after initial compromise.

Python / Node.js interpreters — Reputable beyond question. Also a perfectly functional malware execution engine if a threat actor drops a script and runs it through a trusted interpreter.

PuTTY — A widely used SSH and Telnet client, signed and reputable. Also a straightforward way for a user or attacker to establish outbound SSH tunnels, bypass network controls, or maintain persistent access to external systems — none of which your ISG-based policy will raise an eyebrow at.

Browsers that aren't corporate-approved (Chrome, Firefox, Brave, Opera): all signed, all reputable, and all completely invisible to the proxy policies, DLP controls, and conditional access configurations that are tied to your sanctioned browser. They also typically support unmanaged browser extensions, with all the security challenges those bring. A user running an unsanctioned browser isn't executing malware, but they may be bypassing every web-based and browser management security control you've built.

None of these are malware. To make matters worse, most of them are just portable apps, or apps that can be installed by a regular user without local administrator rights. The ISG won't flag them. An ISG-augmented policy will happily let them run. But in most corporate environments, the question shouldn't be "is this reputable?", it should be "does this have a business reason to run here?"
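The difference between the two questions can be made concrete with a toy model. This is a sketch under stated assumptions, not how WDAC or the ISG actually evaluate a file: the `reputable` flag is a stand-in for a reputation verdict, and the group names and the `BUSINESS_ALLOWLIST` mapping are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Application:
    name: str
    reputable: bool  # stand-in for a reputation verdict, not a real ISG lookup


# Hypothetical organization-specific allowlist:
# application name -> groups with a documented business reason to run it.
BUSINESS_ALLOWLIST = {
    "PsExec": {"server-admins"},
    "Python": {"developers"},
}


def allowed_by_reputation(app: Application) -> bool:
    """Reputation-only rule: anything reputable runs, for everyone."""
    return app.reputable


def allowed_by_business_reason(app: Application, group: str) -> bool:
    """Allowlist rule: runs only where a business reason is on record."""
    return group in BUSINESS_ALLOWLIST.get(app.name, set())


def compare(apps, group):
    """Map each app name to (reputation verdict, business verdict) for a group."""
    return {
        app.name: (allowed_by_reputation(app), allowed_by_business_reason(app, group))
        for app in apps
    }


APPS = [
    Application("PsExec", reputable=True),
    Application("AnyDesk", reputable=True),
    Application("Python", reputable=True),
]

if __name__ == "__main__":
    for name, (by_reputation, by_business) in compare(APPS, "finance").items():
        print(f"{name}: reputation={by_reputation}, business reason={by_business}")
```

For a user in the hypothetical `finance` group, the reputation rule allows all three tools while the business-reason rule allows none of them. That gap is the point of this section.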

Where "Signed and Reputable" does have value — and where it doesn't

The ISG-based approach isn't useless. An allowlisting implementation based on ISG is way better than nothing.

But here's the thing people don't talk about enough: it's a shortcut — and one that tends to make the real goal harder to reach, not easier.

Once you've deployed a policy that leans on ISG reputability, you've created an environment where nobody has done the hard work of defining what should run:

No application inventory

No business justification process

No exception workflow

The organization feels like it has application control, and that perception is often enough to deprioritize the effort of building a proper allowlist.

Meanwhile, moving away from an ISG-based implementation toward a defined, organization-specific policy is cumbersome. You're not building on a foundation — you're dismantling a comfortable shortcut that people have grown accustomed to, while simultaneously trying to build the thing you should have built in the first place.

The "better than nothing" framing is accurate. The "it'll get us started" framing is where it goes wrong.
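To give a sense of what the missing pieces (an inventory, a business justification, an exception workflow) could look like as data, here is a minimal Python sketch. The record fields, the sample entries, and the rule that an exception lapses after its expiry date are all assumptions made for illustration, not a prescribed process.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional


@dataclass
class InventoryEntry:
    application: str
    owner: str          # who answers for this application
    justification: str  # the documented business reason
    # None means a standing approval; a date marks a time-boxed exception.
    exception_expires: Optional[date] = None


def is_approved(inventory: Dict[str, InventoryEntry], application: str, today: date) -> bool:
    """Approve only inventoried apps whose exception, if any, has not lapsed."""
    entry = inventory.get(application)
    if entry is None:
        return False  # not in the inventory: nobody has justified it
    if entry.exception_expires is None:
        return True
    return today <= entry.exception_expires


# Hypothetical sample inventory.
INVENTORY = {
    "PuTTY": InventoryEntry(
        "PuTTY", owner="network-team",
        justification="SSH access to managed switches",
    ),
    "AnyDesk": InventoryEntry(
        "AnyDesk", owner="helpdesk",
        justification="Temporary vendor support session",
        exception_expires=date(2025, 6, 30),
    ),
}

if __name__ == "__main__":
    for name in ("PuTTY", "AnyDesk", "TeamViewer"):
        print(name, is_approved(INVENTORY, name, date(2025, 7, 1)))
```

Every allowed application here has an owner and a reason attached, which is exactly the information a reputation-based policy never asks for.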

The practical takeaway

When someone tells you their application control strategy relies on "Signed and Reputable" software being allowed to run, ask them these questions:

Is PsExec reputable in your environment? Should it run on every endpoint?

Is a Python interpreter reputable? Should every user be able to execute arbitrary Python?

Are remote access tools reputable? Are they all sanctioned?

Is PuTTY reputable in your environment? Should every user be able to establish outbound SSH tunnels to arbitrary destinations?

Are all the browsers on your endpoints running through your security controls?

If the answer to any of these is "well, not everywhere" — then you already agree that reputation alone isn't the right control. You need to define what's appropriate for your environment, not what's common across Microsoft's global telemetry.

"Signed and Reputable" is a useful signal. It's just not a policy.
